LSTM & GAN

Manual LSTM gates for palindrome sequence modeling · GAN for MNIST generation

A hands-on deep learning project built around two classic modeling tasks: implementing an LSTM cell manually for palindrome sequence prediction, and training a fully connected GAN on MNIST with latent-space interpolation experiments. Both components are built from basic PyTorch layers rather than high-level wrappers such as torch.nn.LSTM, to demonstrate understanding of the underlying mechanics.

Highlights

- Implemented LSTM gating logic from scratch (input, forget, cell, output gates; hidden and cell state updates) without using torch.nn.LSTM

- Validated the custom LSTM on palindrome sequences of varying lengths, analyzing how prediction accuracy degrades as sequence length increases

- Built a fully connected GAN for MNIST from scratch: generator with BatchNorm and Tanh, discriminator with LeakyReLU and sigmoid, alternating adversarial optimization loop

- Conducted latent-space interpolation experiments to verify continuity in the generator’s learned representation

- Preserved training artifacts throughout: staged sample grids, loss curves, accuracy plots, a serialized generator checkpoint, and a written technical report

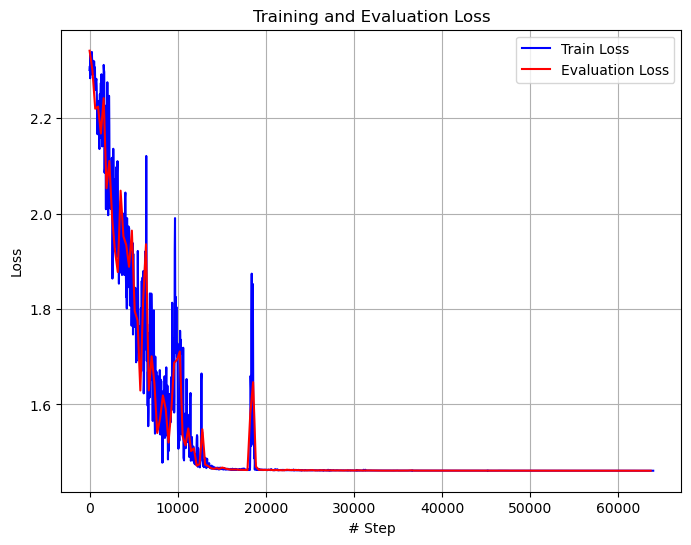

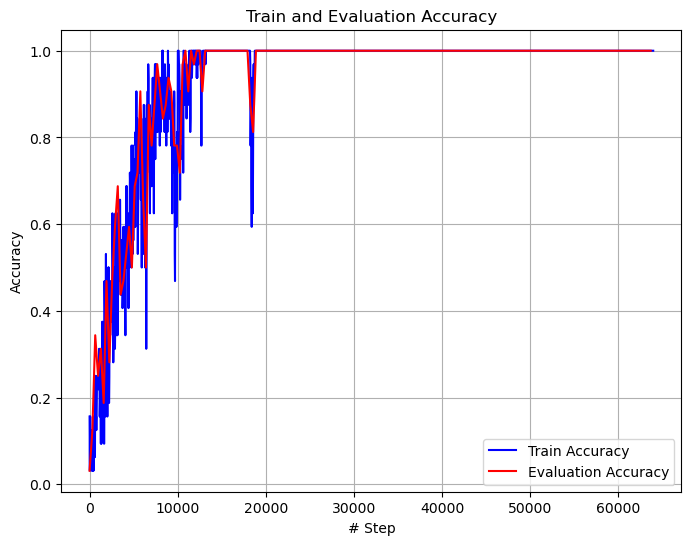

Part 1 — Manual LSTM for Sequence Modeling

The LSTM is implemented in lstm.py without torch.nn.LSTM. All four gates and the cell/hidden state update equations are written explicitly with nn.Linear layers, making the recurrent logic fully transparent.
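To make the gate structure concrete, here is a minimal sketch of one way such a cell could be written. It is not the code from lstm.py: the class name `ManualLSTMCell`, the helper `run_sequence`, and the choice to feed each gate the concatenation of the input and the previous hidden state are assumptions made for illustration.

```python
import torch
import torch.nn as nn


class ManualLSTMCell(nn.Module):
    """One LSTM step with each gate written out as its own nn.Linear layer.

    Illustrative sketch only; names and layout are assumptions, not lstm.py.
    """

    def __init__(self, input_dim: int, hidden_dim: int):
        super().__init__()
        # Each gate sees the concatenation [x_t, h_{t-1}].
        self.input_gate = nn.Linear(input_dim + hidden_dim, hidden_dim)
        self.forget_gate = nn.Linear(input_dim + hidden_dim, hidden_dim)
        self.cell_gate = nn.Linear(input_dim + hidden_dim, hidden_dim)
        self.output_gate = nn.Linear(input_dim + hidden_dim, hidden_dim)

    def forward(self, x_t, h_prev, c_prev):
        xh = torch.cat([x_t, h_prev], dim=1)
        i = torch.sigmoid(self.input_gate(xh))   # how much new content to write
        f = torch.sigmoid(self.forget_gate(xh))  # how much old cell state to keep
        g = torch.tanh(self.cell_gate(xh))       # candidate cell content
        o = torch.sigmoid(self.output_gate(xh))  # how much cell state to expose
        c_t = f * c_prev + i * g                 # cell state update
        h_t = o * torch.tanh(c_t)                # hidden state update
        return h_t, c_t


def run_sequence(cell, x, hidden_dim):
    """Unroll the cell over x of shape (batch, seq_len, input_dim)."""
    batch = x.size(0)
    h = torch.zeros(batch, hidden_dim)
    c = torch.zeros(batch, hidden_dim)
    for t in range(x.size(1)):
        h, c = cell(x[:, t, :], h, c)
    return h, c
```

The final hidden state returned by `run_sequence` would typically be passed through one more linear layer to produce class logits for the predicted digit.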

Training setup: palindrome dataset with configurable sequence length, gradient clipping, per-epoch loss and accuracy tracking.
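The sketch below shows how a palindrome batch and a clipped training step might look. Several details are assumptions rather than facts about this project: the task is framed as predicting the last digit from all preceding digits, the loss is cross-entropy, and the function names and the `max_norm` default are hypothetical.

```python
import torch
import torch.nn as nn


def make_palindrome_batch(batch_size: int, seq_len: int, num_classes: int = 10):
    """Random digit palindromes, split into (all but last digit, last digit).

    Assumed task framing: the model must recall the first digit at the end.
    """
    half = torch.randint(0, num_classes, (batch_size, (seq_len + 1) // 2))
    # Mirror the first half; for odd lengths the middle digit is not repeated.
    mirrored = torch.flip(half[:, : seq_len // 2], dims=[1])
    full = torch.cat([half, mirrored], dim=1)
    return full[:, :-1], full[:, -1]


def train_step(model, optimizer, inputs, targets, max_norm: float = 10.0):
    """One optimization step with gradient-norm clipping (max_norm is a guess)."""
    optimizer.zero_grad()
    logits = model(inputs)
    loss = nn.functional.cross_entropy(logits, targets)
    loss.backward()
    # Rescale gradients so their global norm does not exceed max_norm.
    torch.nn.utils.clip_grad_norm_(model.parameters(), max_norm=max_norm)
    optimizer.step()
    accuracy = (logits.argmax(dim=1) == targets).float().mean().item()
    return loss.item(), accuracy
```

Averaging the returned loss and accuracy over all batches in an epoch gives the per-epoch curves mentioned above.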

The model handles shorter and medium-length palindromes reliably and degrades more gradually than a basic RNN as sequence length grows, reflecting the gating mechanism’s role in preserving long-range information.

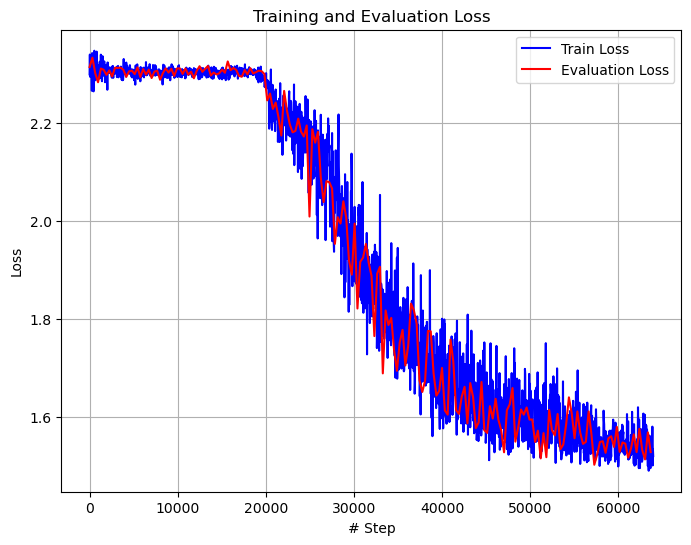

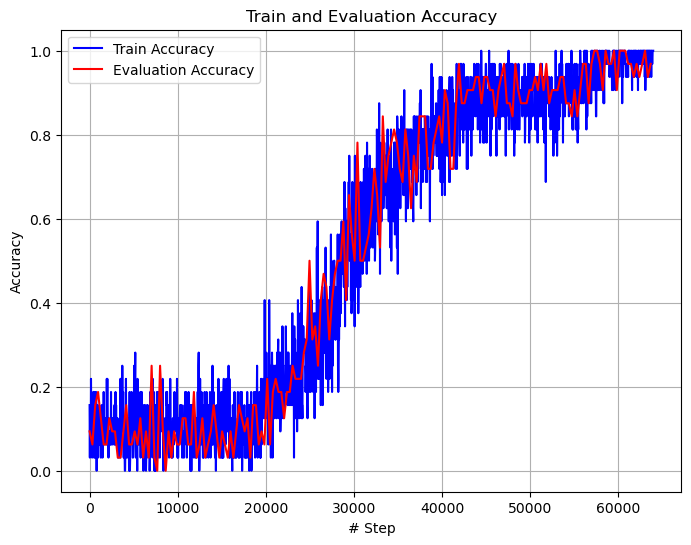

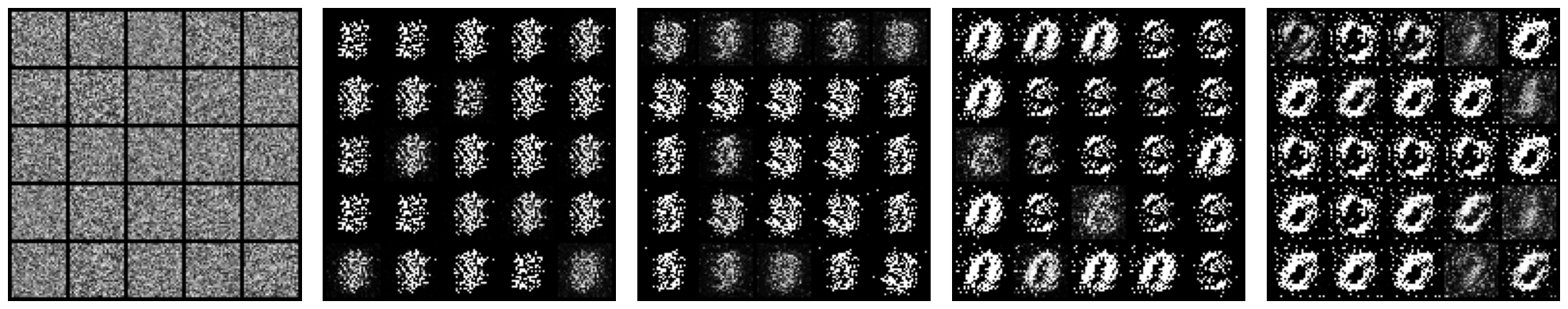

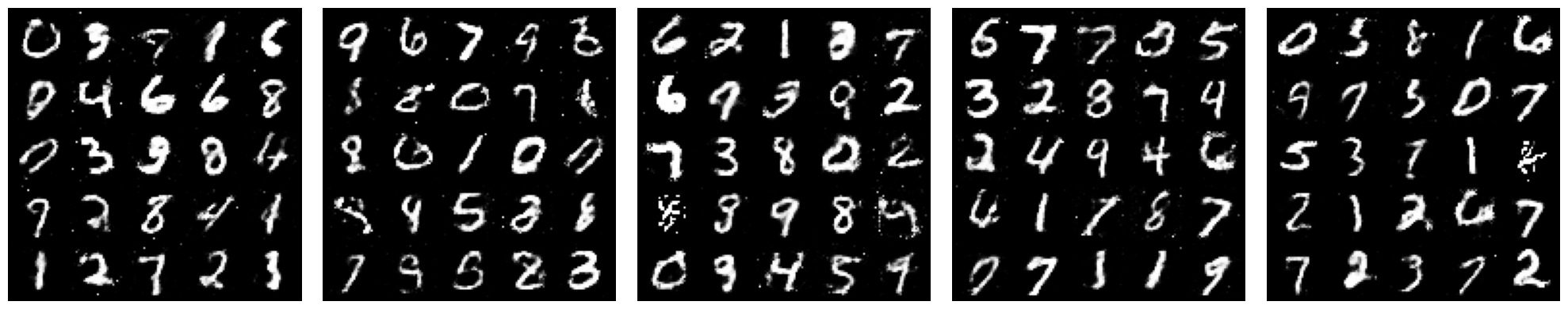

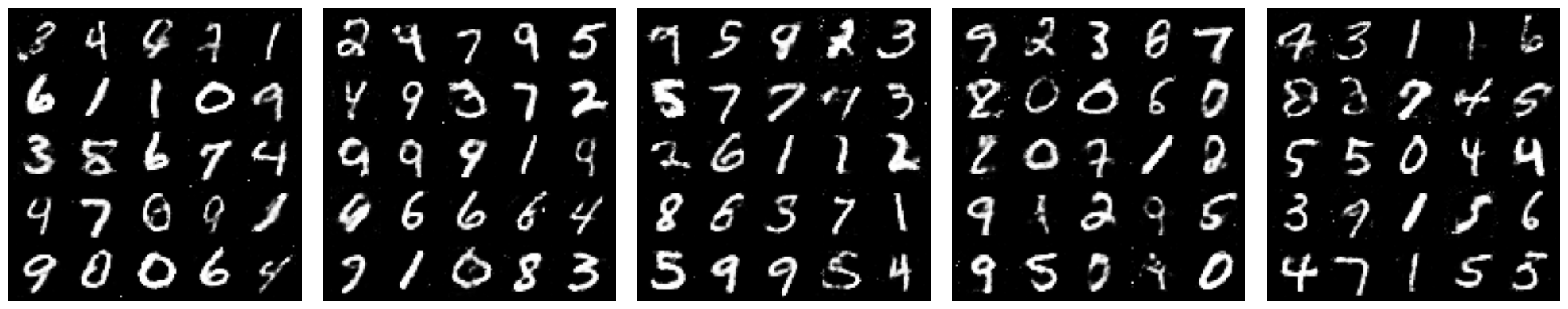

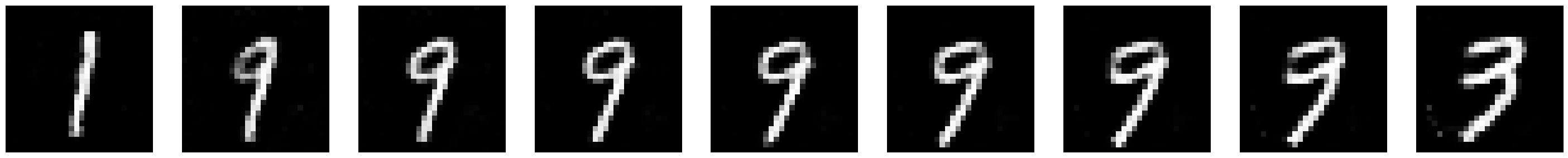

Part 2 — Fully Connected GAN on MNIST

A multilayer perceptron GAN trained on MNIST using a standard generator-discriminator adversarial setup:

- Generator: stacked linear layers, BatchNorm, LeakyReLU activations, Tanh output

- Discriminator: linear layers, LeakyReLU, sigmoid output

- Training: alternating generator and discriminator updates with BCE loss; sample grids saved at regular intervals

Using fully connected layers (rather than convolutions) makes the training objective and model behavior easier to inspect at each stage.
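As a rough illustration of the setup described above, the following sketch defines a small generator and discriminator and runs one alternating update. The layer widths, the latent size of 100, the LeakyReLU slope of 0.2, and all function names are assumptions; the project's actual values are not stated here.

```python
import torch
import torch.nn as nn

LATENT_DIM = 100  # assumed latent size, not taken from the project


def build_generator(latent_dim: int = LATENT_DIM) -> nn.Sequential:
    return nn.Sequential(
        nn.Linear(latent_dim, 128),
        nn.LeakyReLU(0.2),
        nn.Linear(128, 256),
        nn.BatchNorm1d(256),
        nn.LeakyReLU(0.2),
        nn.Linear(256, 784),  # 28 * 28 flattened MNIST image
        nn.Tanh(),            # outputs in [-1, 1]
    )


def build_discriminator() -> nn.Sequential:
    return nn.Sequential(
        nn.Linear(784, 256),
        nn.LeakyReLU(0.2),
        nn.Linear(256, 1),
        nn.Sigmoid(),         # probability that the input is real
    )


def gan_step(G, D, opt_g, opt_d, real, latent_dim: int = LATENT_DIM):
    """One discriminator update followed by one generator update."""
    bce = nn.BCELoss()
    batch = real.size(0)
    ones = torch.ones(batch, 1)
    zeros = torch.zeros(batch, 1)

    # Discriminator: push real images toward 1 and generated images toward 0.
    # detach() stops this loss from sending gradients into the generator.
    fake = G(torch.randn(batch, latent_dim))
    opt_d.zero_grad()
    d_loss = bce(D(real), ones) + bce(D(fake.detach()), zeros)
    d_loss.backward()
    opt_d.step()

    # Generator: try to make the updated discriminator label fakes as real.
    opt_g.zero_grad()
    g_loss = bce(D(fake), ones)
    g_loss.backward()
    opt_g.step()
    return d_loss.item(), g_loss.item()
```

Because the generator ends in Tanh, real MNIST images would need to be flattened to 784 values and scaled to [-1, 1] before being passed as `real`.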

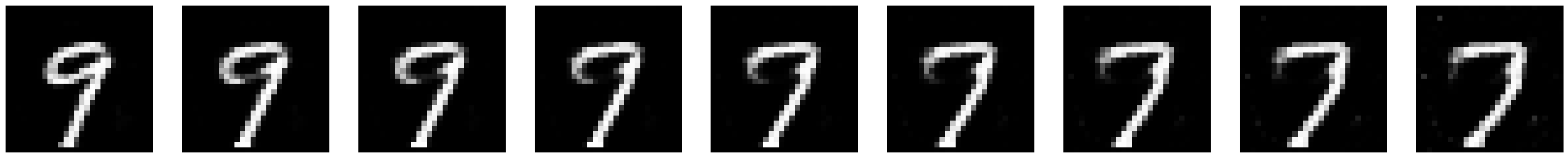

Latent-Space Interpolation

Interpolating between pairs of latent vectors tests whether the generator has learned a smooth, continuous representation or simply memorized discrete samples.
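A minimal sketch of such an experiment, assuming straight-line (linear) interpolation between two latent vectors; the function names and the 28x28 reshape are illustrative, and the project may use a different scheme or step count.

```python
import torch


def interpolate_latents(z_start, z_end, steps: int = 9):
    """Return (steps, latent_dim) points evenly spaced from z_start to z_end."""
    alphas = torch.linspace(0.0, 1.0, steps).unsqueeze(1)  # shape (steps, 1)
    return (1 - alphas) * z_start + alphas * z_end


@torch.no_grad()
def decode_path(generator, zs):
    """Map each latent point to an image; assumes 784-dimensional generator output."""
    generator.eval()  # use BatchNorm running statistics instead of batch statistics
    return generator(zs).view(-1, 28, 28)
```

If the decoded images change gradually from one digit to the other, with plausible intermediate shapes rather than abrupt jumps, that suggests the latent space is continuous in the region between the two endpoints.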

Technical Summary

| Item | Details |
| --- | --- |
| Language | Python 3 |
| Framework | PyTorch |
| Models | Manual LSTM, fully connected GAN (MLP) |
| Tasks | Sequence modeling, image generation, latent interpolation |
| Key components | Custom gate equations, adversarial training loop, BatchNorm, gradient clipping |
| Artifacts | Loss/accuracy curves, staged sample grids, generator checkpoint, written report |