Multi-Weather Segmentation

[PyTorch] CARLA-simulated multi-weather dataset · DeepLabV3 · Autonomous driving perception

A research project investigating the robustness of semantic segmentation across diverse weather conditions for autonomous driving. Using the CARLA simulator, we collected a 3,600-image dataset spanning 9 weather-time combinations and systematically evaluated three training strategies, from a clear-only baseline to weather-aware curriculum learning, on DeepLabV3 with a ResNet-50 backbone and the 19 standard Cityscapes classes.

Highlights

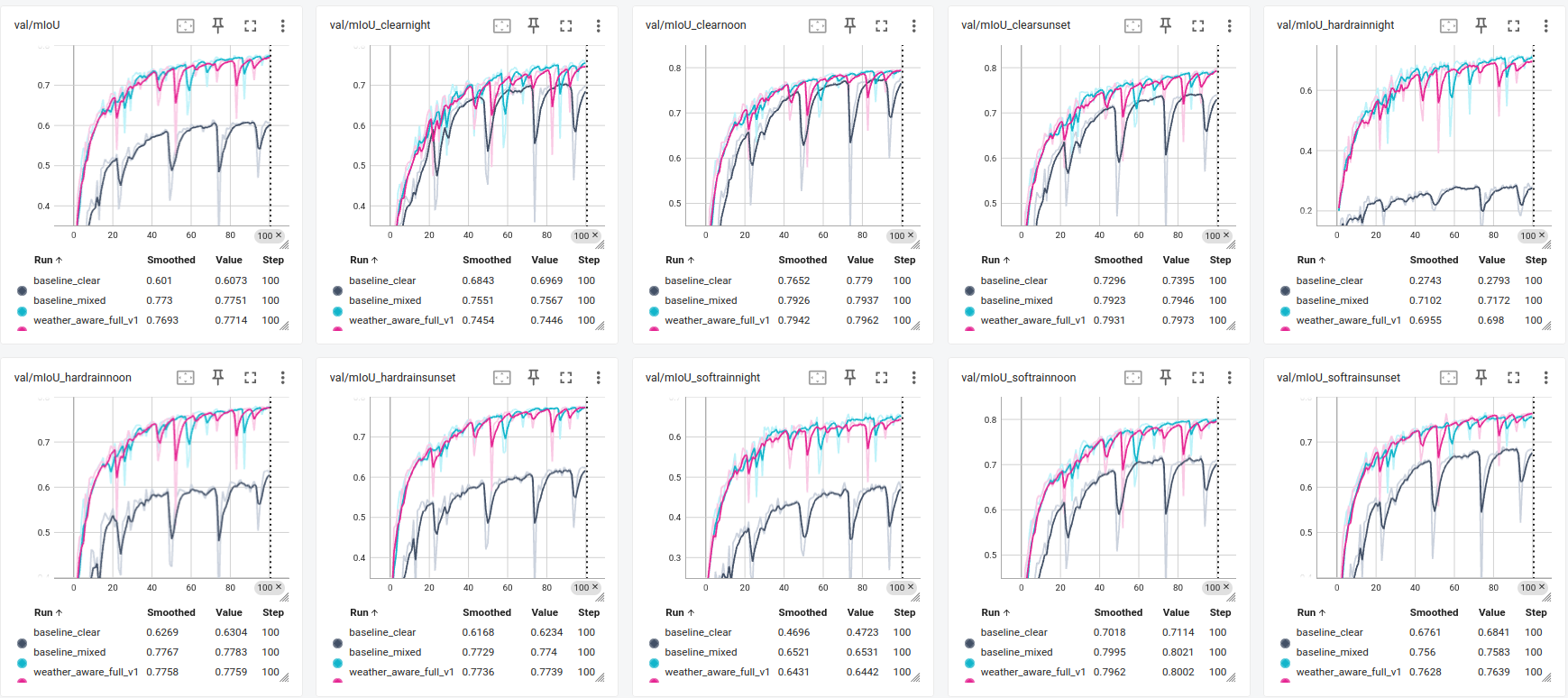

- Designed Weather-Aware Curriculum Learning (3-stage progressive training): clear → clear + soft rain → all 9 conditions with 10:20:70 hard-rain-biased sampling, raising avg mIoU from 0.614 for the clear-only baseline to 0.776+

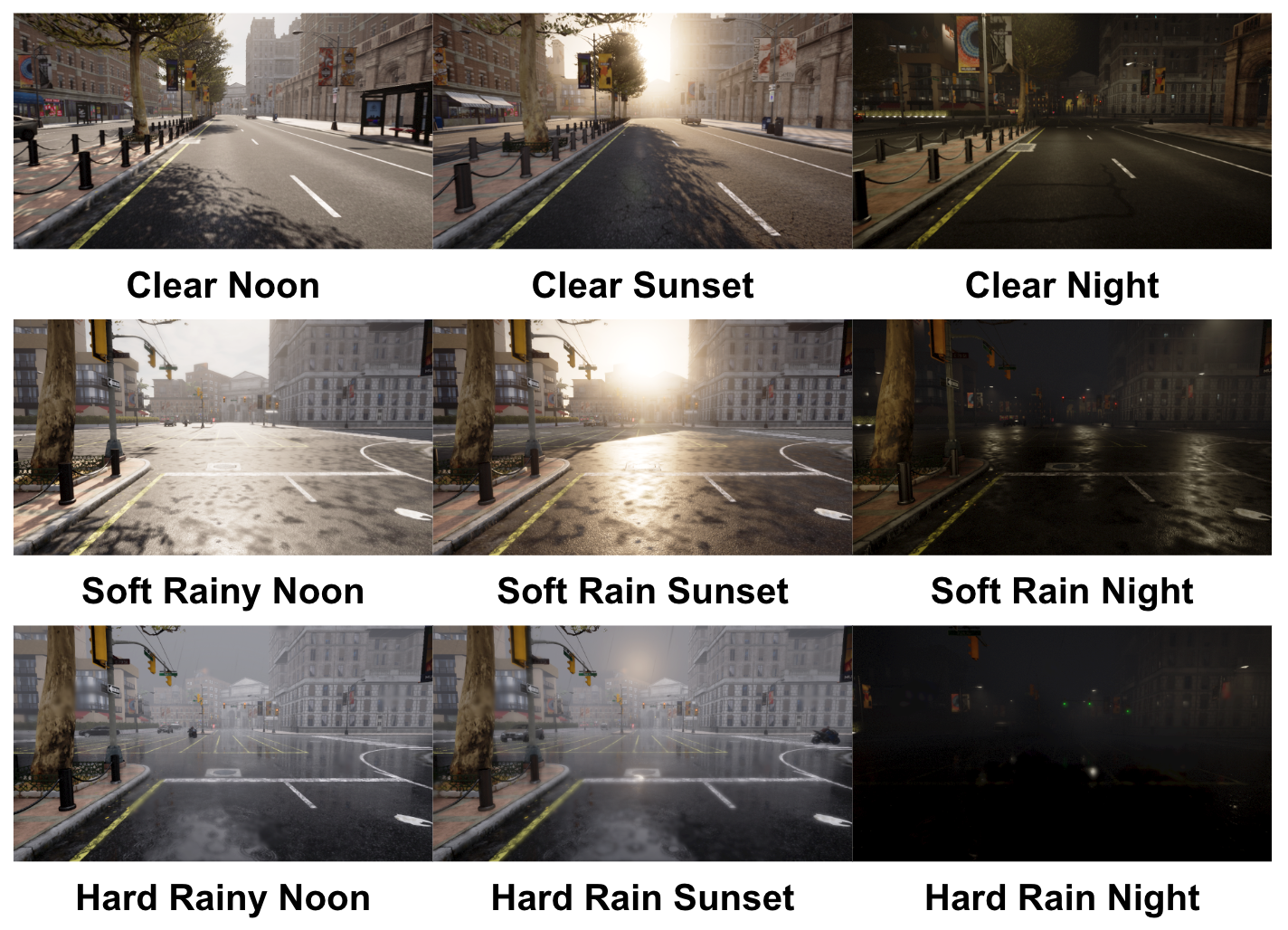

- Built custom CARLA data collection pipeline: ego vehicle + autopilot traffic, 512×512 camera at 1.6m height, 9 weather-time combos (Clear/SoftRain/HardRain × Noon/Sunset/Night), 3,600 annotated frames total

- The clear-only baseline degrades severely under domain shift: mIoU drops from 0.779 (ClearNoon) to 0.291 (HardRainNight), while curriculum learning stays at roughly 0.70 or above across all conditions

- Evaluated per-weather mIoU across all 9 conditions: curriculum training shows consistent robustness gains, especially on rare hard-rain nighttime scenarios where clear-only fails catastrophically

- Weighted sampling in Stage 3 (10:20:70 ratio) explicitly counteracts the imbalance between easy samples and safety-critical hard-rain samples
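The 10:20:70 sampling in Stage 3 could be realized with per-sample weights. The following is a minimal sketch, not the project's actual code. It assumes the ratio maps to clear : soft rain : hard rain (the text implies this but does not state it), and the group sizes are hypothetical. It uses only the standard library; the same weights could be passed to `torch.utils.data.WeightedRandomSampler` in a PyTorch data loader.

```python
import random
from collections import Counter

# Assumed mapping of the 10:20:70 ratio onto weather groups (not stated explicitly in the source).
GROUP_SHARE = {"Clear": 0.10, "SoftRain": 0.20, "HardRain": 0.70}


def per_sample_weights(groups):
    """Return one weight per sample so that each group is drawn with its target share."""
    counts = Counter(groups)
    # Dividing by the group size makes the weights of each group sum to its share.
    return [GROUP_SHARE[g] / counts[g] for g in groups]


def draw_indices(groups, num_draws, seed=0):
    """Draw sample indices with replacement according to the group shares."""
    rng = random.Random(seed)
    weights = per_sample_weights(groups)
    return rng.choices(range(len(groups)), weights=weights, k=num_draws)


# Hypothetical dataset layout: the same number of frames in each weather group.
groups = ["Clear"] * 1200 + ["SoftRain"] * 1200 + ["HardRain"] * 1200
drawn = draw_indices(groups, num_draws=10_000)
share = Counter(groups[i] for i in drawn)
```

Because the weights are normalized per group, the drawn proportions track the target ratio even if the groups contain different numbers of frames.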

Training Strategies

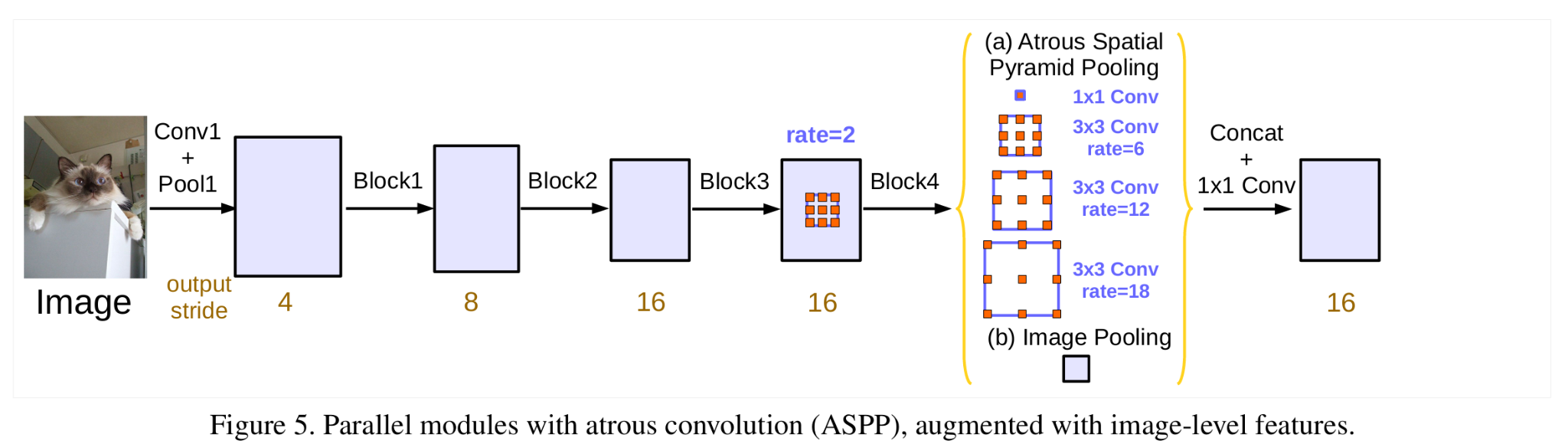

Three strategies were compared on DeepLabV3 with a ResNet-50 backbone, pre-trained on a 20-class COCO subset:

| Strategy | Description | Avg mIoU |
|---|---|---|
| Setting 0: Clear-Only | Trained on clear weather only | 0.614 |
| Setting 1: Mixed-Weather | Random mixing of all 9 conditions per batch | 0.776 |
| Setting 2: Curriculum Learning | Progressive 3-stage training with weighted sampling | ≥ 0.70 (all conditions) |

The curriculum approach avoids the instability of direct multi-weather training while preventing the clear-weather bias of Setting 0.
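The three-stage progression can be sketched as a simple schedule over weather conditions. This is an illustrative sketch only: the stage names and epoch counts are hypothetical, and it assumes each stage uses all three times of day for its weather types, which the text does not specify.

```python
from itertools import product

TIMES = ("Noon", "Sunset", "Night")

# Stage order follows clear -> clear + soft rain -> all conditions.
# Stage names and epoch counts are hypothetical placeholders.
STAGES = [
    {"name": "stage1_clear", "weathers": ("Clear",), "epochs": 10},
    {"name": "stage2_clear_softrain", "weathers": ("Clear", "SoftRain"), "epochs": 10},
    {"name": "stage3_all", "weathers": ("Clear", "SoftRain", "HardRain"), "epochs": 20},
]


def conditions_for(stage):
    """List condition names such as 'HardRainNight' used in a stage."""
    return [weather + time for weather, time in product(stage["weathers"], TIMES)]


def curriculum_schedule():
    """Yield (stage_name, epoch_index, conditions) in training order."""
    for stage in STAGES:
        conditions = conditions_for(stage)
        for epoch in range(stage["epochs"]):
            yield stage["name"], epoch, conditions


for stage in STAGES:
    print(stage["name"], len(conditions_for(stage)), "conditions")
```

A training loop would iterate over `curriculum_schedule()` and rebuild its data loader whenever the stage name changes, applying the weighted sampling only in the last stage.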

Dataset

Model Architecture

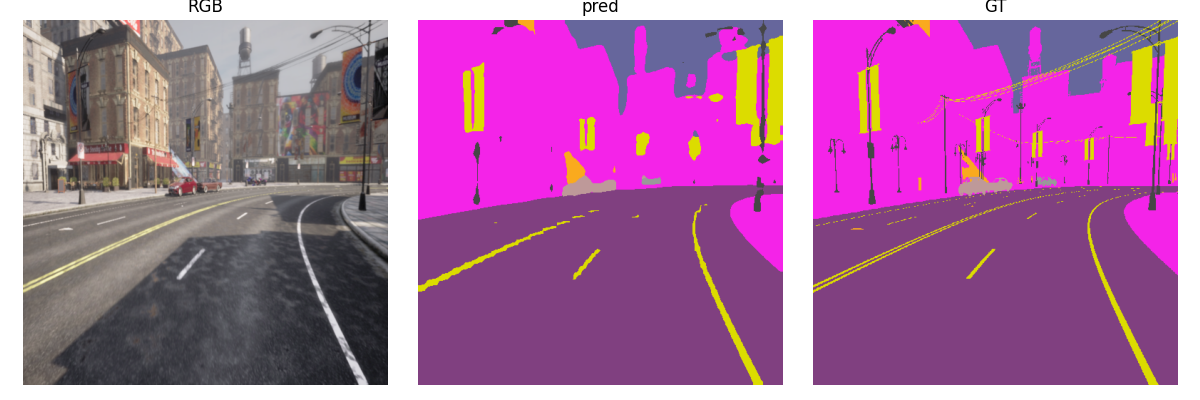

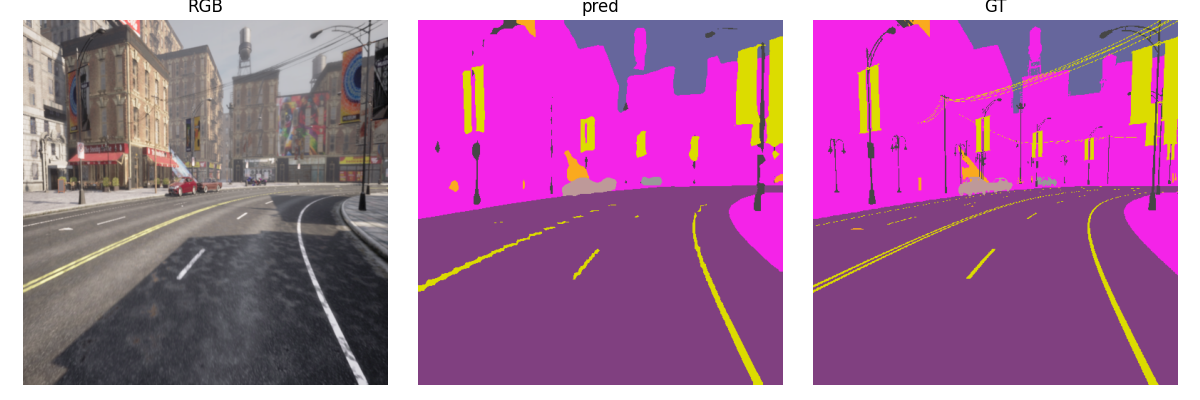

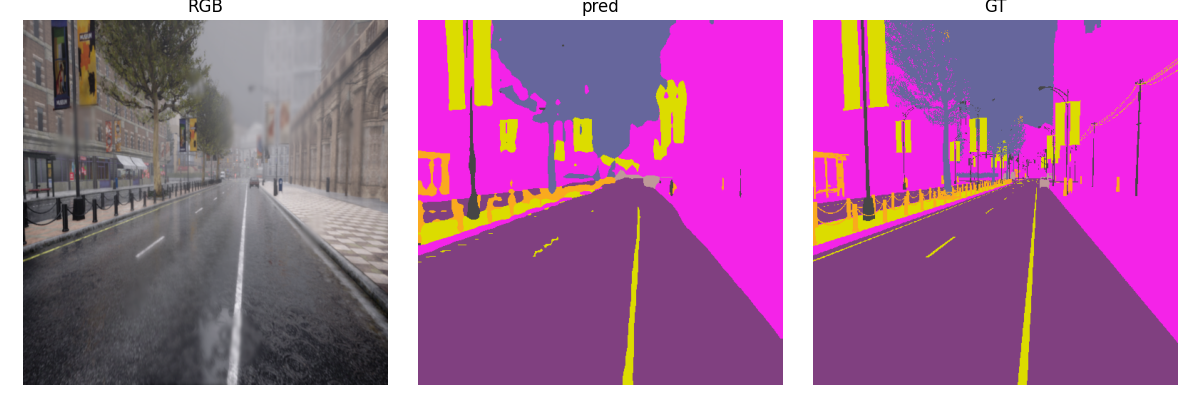

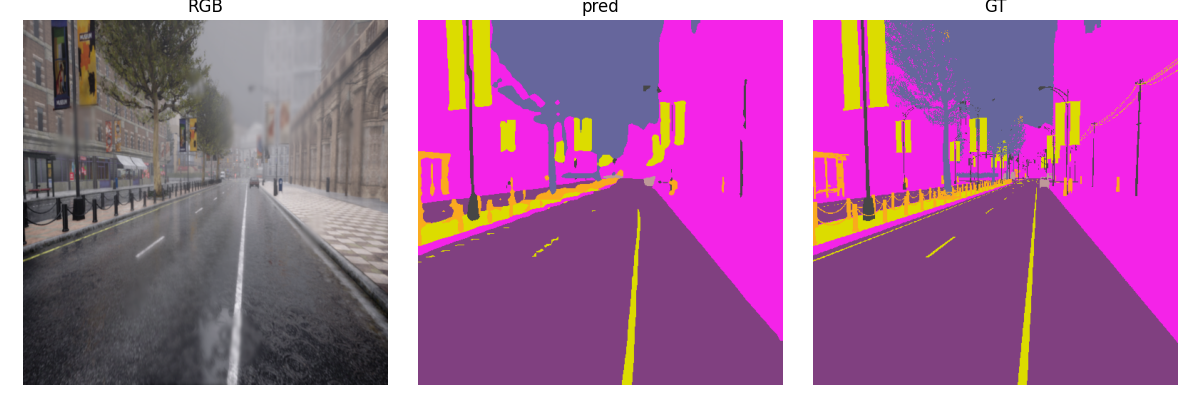

Qualitative Results

Each row shows the RGB input, the model prediction, and the ground truth. The examples compare Clear-Only (Setting 0) with Curriculum Learning (Setting 2) under adverse weather conditions:

Soft Rain — Noon

Hard Rain — Noon

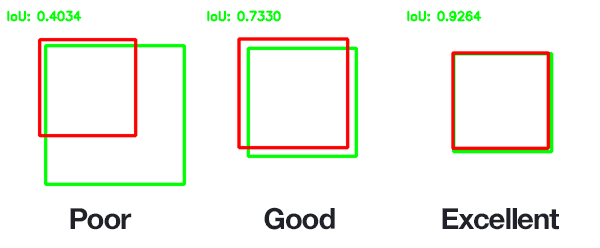

Quantitative Results
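The mIoU values reported in this document are assumed to follow the standard definition: per-class intersection over union, averaged over the classes that occur. The sketch below illustrates that definition in plain Python on flattened label lists. The ignore label of 255 and the choice to skip absent classes are assumptions, and a real evaluation would vectorize this with tensors.

```python
def confusion_matrix(pred, target, num_classes, ignore_index=255):
    """Count (target, prediction) pairs, skipping pixels labeled `ignore_index`."""
    cm = [[0] * num_classes for _ in range(num_classes)]
    for p, t in zip(pred, target):
        if t == ignore_index:
            continue
        cm[t][p] += 1
    return cm


def mean_iou(cm):
    """Average IoU = TP / (TP + FP + FN) over classes that appear at all."""
    ious = []
    for c in range(len(cm)):
        tp = cm[c][c]
        fn = sum(cm[c]) - tp                  # target is c, predicted otherwise
        fp = sum(row[c] for row in cm) - tp   # predicted c, target is otherwise
        denom = tp + fp + fn
        if denom > 0:                         # skip classes absent from both
            ious.append(tp / denom)
    return sum(ious) / len(ious) if ious else 0.0


# Tiny hypothetical example with 3 classes; the last pixel is ignored.
pred = [0, 0, 1, 1, 2]
target = [0, 1, 1, 1, 255]
cm = confusion_matrix(pred, target, num_classes=3)
miou = mean_iou(cm)
```

In this toy example class 0 has IoU 1/2 and class 1 has IoU 2/3, while class 2 is skipped because it never appears among the counted pixels.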

Technical Summary

| Item | Details |
|---|---|
| Language | Python 3.10 |
| Model | DeepLabV3 (ResNet-50 backbone, COCO pre-trained) |
| Task | 19-class semantic segmentation across 9 weather conditions |
| Dataset | 3,600 CARLA-simulated images, 512×512, ground-truth masks |
| Best avg mIoU | 0.776 (Mixed) vs. 0.614 (Clear-Only baseline) |
| Framework | PyTorch 2.7.1, CUDA 12.8 |
| GPU | NVIDIA RTX 5080 (16 GB VRAM) |
| Simulator | CARLA 0.9.15 |