SEM Analysis Pipeline

[PyTorch] SEM image dataset of ancient bronze molds · SAM zero-shot segmentation · VGG classification · Applied to archaeological research

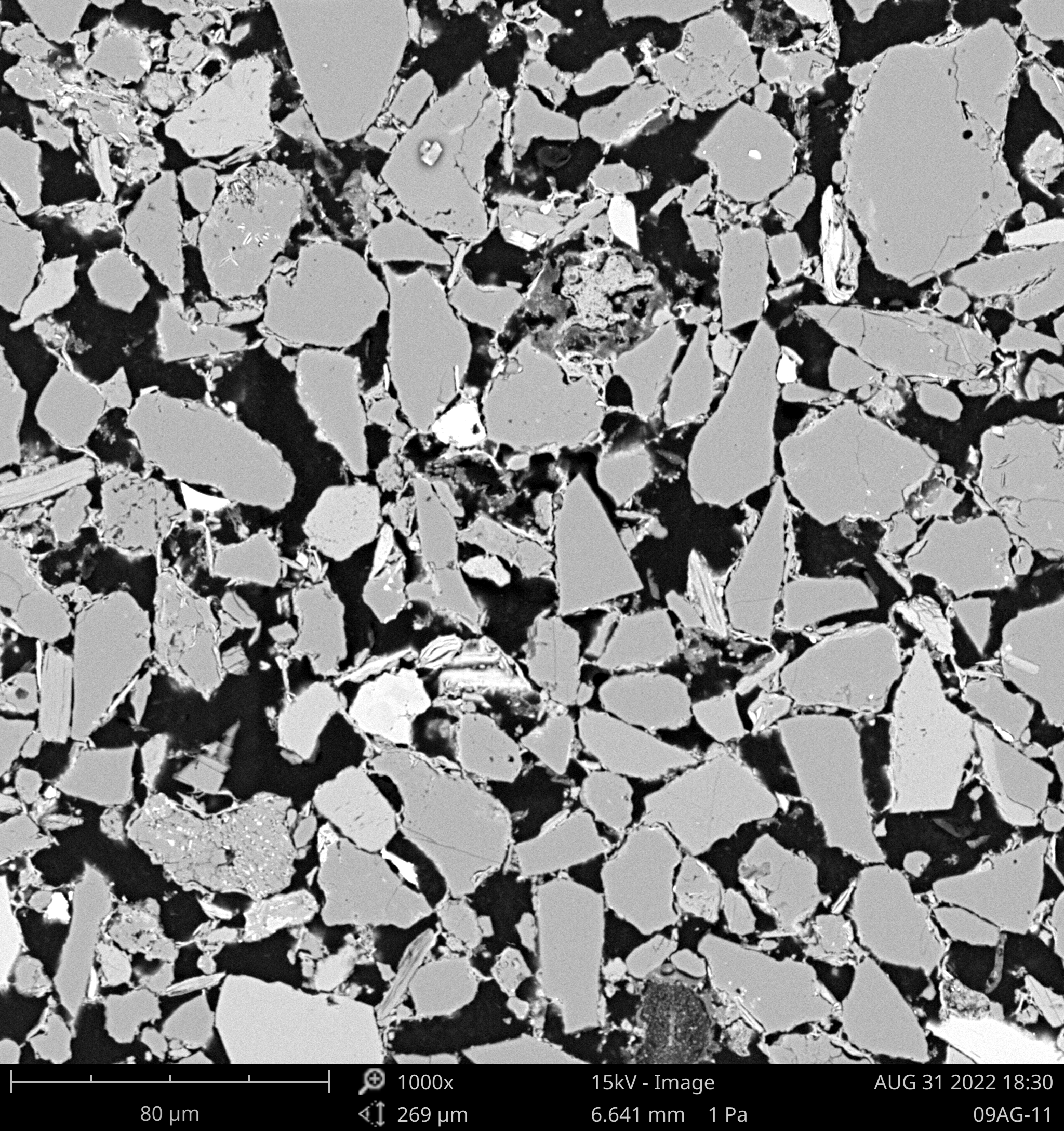

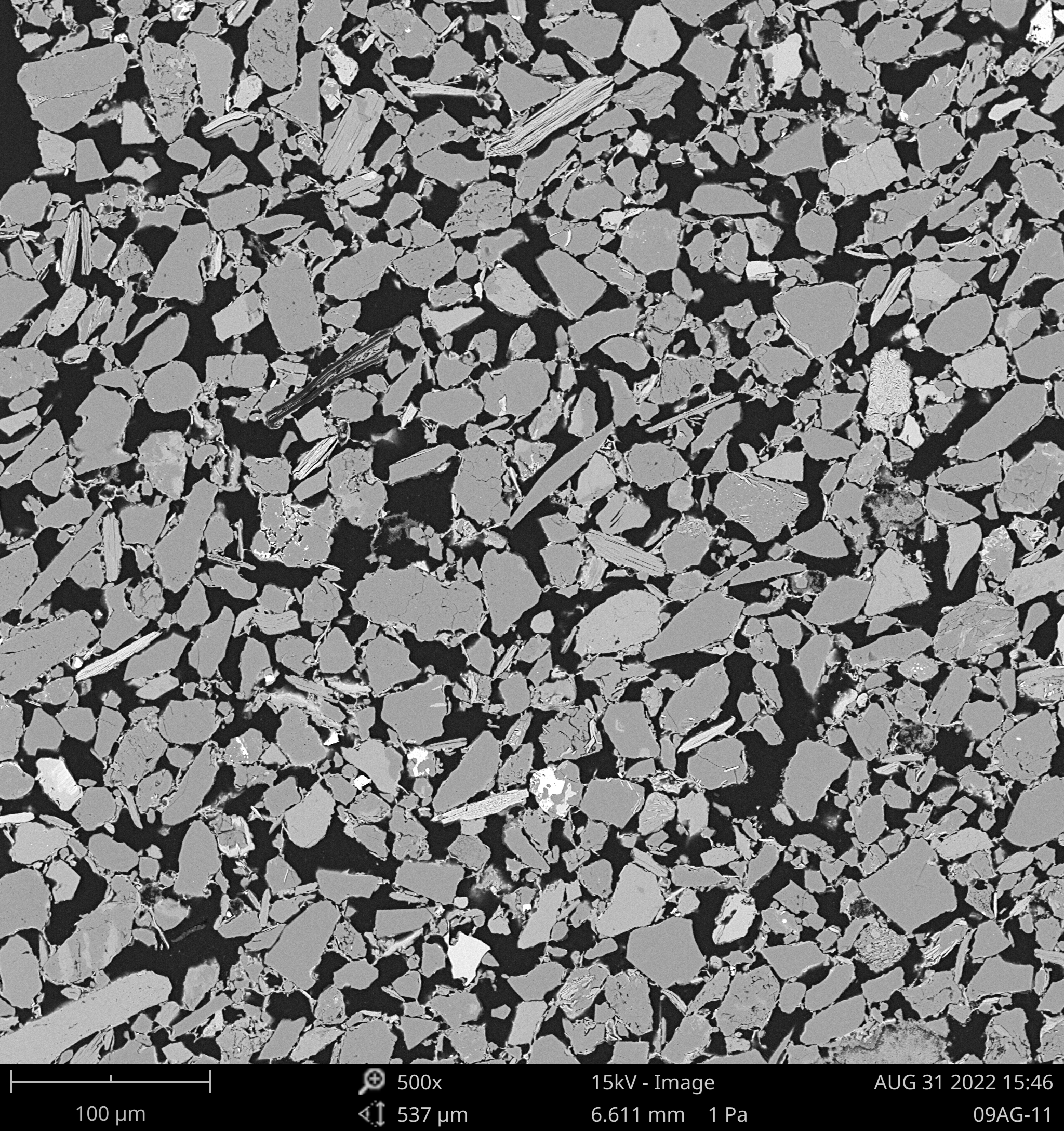

A full-stack computer vision system for analyzing the microstructure of ancient Chinese bronze ware molds (土范) from scanning electron microscope (SEM) images. Built for Prof. Jing Zhichun (荆志淳) at SUSTech’s Institute for Advanced Study in Social Science, the pipeline applies SAM-based zero-shot particle segmentation and VGG-B classification to identify three material components — gravel (沙砾), clay (黏土), and cavities (空腔) — and produces quantitative composition reports. Results were directly applied to ongoing archaeological research on Shang Dynasty bronze casting techniques.

Highlights

- Applied SAM (ViT-H, Meta’s largest checkpoint) for zero-shot particle segmentation — no domain-specific retraining; IoU/stability thresholds tuned for SEM material contrast

- Implemented VGG-B for three-class mask classification: gravel (沙砾) / clay (黏土) / cavity (空腔) — 92% test accuracy; linear layer trimmed for inference efficiency

- Designed full pipeline: sliding-window crop (224×224) → SAM segmentation → mask extraction → VGG classification → color overlay reconstruction → confidence + area statistics

- Built a custom dataset from real SEM specimens provided by Prof. Jing Zhichun, covering 500×–2000× magnification across multiple archaeological sites and dynasties

- Delivered quantitative composition output per image: per-class area pie chart and confidence distribution, directly usable as archaeological evidence

- Full-stack web app (Vue.js + Flask) with live drag-and-drop SEM upload, dynamic colored mask overlay, and auto-generated analysis dashboard

Pipeline Overview

The pipeline processes any SEM image through four stages:

- Sliding-window crop: input image split into 224×224 tiles (no overlap, no gaps) for memory-efficient SAM processing

- SAM segmentation: ViT-H SAM generates all particle masks per tile; parameters tuned (`pred_iou_thresh=0.94`, `stability_score_thresh`, `min_mask_region_area=1024`) for SEM contrast

- VGG-B classification: each SAM mask region is extracted from the original image and classified as gravel, clay, or cavity; masks colored by category

- Result reconstruction: tiles stitched back; colored masks alpha-composited over original; confidence and area statistics computed
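The tiling and stitching stages, together with the SAM settings quoted in the list, can be sketched roughly as follows. This is a minimal illustration, not the project's actual code. The function names (`split_into_tiles`, `stitch_tiles`, `build_mask_generator`) are hypothetical. Zero-padding the right and bottom edges is an assumption, since the text does not say how images whose size is not a multiple of 224 are handled. `stability_score_thresh` is left at the library default because its tuned value is not stated. `SamAutomaticMaskGenerator` and `sam_model_registry` come from Meta's `segment_anything` package, which is imported lazily so the tiling helpers run without it.

```python
import numpy as np

TILE = 224  # tile edge length from the pipeline description

# SAM settings quoted in the text. stability_score_thresh is also tuned in the
# project, but its value is not given, so the library default is used here.
SAM_KWARGS = {"pred_iou_thresh": 0.94, "min_mask_region_area": 1024}


def build_mask_generator(checkpoint_path, device="cuda"):
    """Create a ViT-H SAM automatic mask generator (requires segment-anything)."""
    from segment_anything import SamAutomaticMaskGenerator, sam_model_registry

    sam = sam_model_registry["vit_h"](checkpoint=checkpoint_path)
    sam.to(device=device)
    return SamAutomaticMaskGenerator(sam, **SAM_KWARGS)


def split_into_tiles(image, tile=TILE):
    """Zero-pad the bottom/right edges (assumption), then cut a regular grid.

    Returns a dict mapping each tile's (y, x) top-left corner to its pixels.
    """
    h, w = image.shape[:2]
    pad = [(0, (-h) % tile), (0, (-w) % tile)] + [(0, 0)] * (image.ndim - 2)
    padded = np.pad(image, pad, mode="constant")
    tiles = {}
    for y in range(0, padded.shape[0], tile):
        for x in range(0, padded.shape[1], tile):
            tiles[(y, x)] = padded[y:y + tile, x:x + tile]
    return tiles


def stitch_tiles(tiles, shape, tile=TILE):
    """Reassemble tiles on a padded canvas and crop back to the original shape."""
    h, w = shape[:2]
    rows, cols = -(-h // tile), -(-w // tile)  # ceiling division
    first = next(iter(tiles.values()))
    canvas = np.zeros((rows * tile, cols * tile) + first.shape[2:], dtype=first.dtype)
    for (y, x), patch in tiles.items():
        canvas[y:y + tile, x:x + tile] = patch
    return canvas[:h, :w]
```

In use, each tile would be passed to `generator.generate(tile)`, which expects an H×W×3 `uint8` array, so single-channel SEM tiles would presumably need to be replicated to three channels first.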

Segmentation Results

SEM images of bronze mold specimens after processing through the SAM → VGG pipeline. Colors indicate the classified material: red = gravel (沙砾), green = clay (黏土), blue = cavities (空腔).
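The color coding and the per-class area statistics can be illustrated with a short NumPy sketch. The helper names (`overlay_masks`, `area_fractions`), the blending weight `alpha`, and the exact RGB values are assumptions made for illustration; only the red/green/blue class assignment comes from the text.

```python
import numpy as np

# Class colors as listed in the text (RGB); exact shades are assumed.
CLASS_COLORS = {
    "gravel": (255, 0, 0),
    "clay": (0, 255, 0),
    "cavity": (0, 0, 255),
}


def overlay_masks(image_rgb, labeled_masks, alpha=0.5):
    """Alpha-blend class-colored masks over an RGB image.

    labeled_masks: list of (boolean HxW mask, class name) pairs.
    """
    out = image_rgb.astype(np.float32)
    for mask, label in labeled_masks:
        color = np.array(CLASS_COLORS[label], dtype=np.float32)
        out[mask] = (1.0 - alpha) * out[mask] + alpha * color
    return np.clip(np.rint(out), 0, 255).astype(np.uint8)


def area_fractions(labeled_masks, shape):
    """Fraction of image pixels covered by each class.

    Overlapping masks of the same class are counted once.
    """
    total = shape[0] * shape[1]
    fractions = {}
    for name in CLASS_COLORS:
        covered = np.zeros(shape[:2], dtype=bool)
        for mask, label in labeled_masks:
            if label == name:
                covered |= mask
        fractions[name] = float(covered.sum()) / total
    return fractions
```

The fractions returned by `area_fractions` are the kind of values an area pie chart would be drawn from. Pixels covered by no mask are simply left out, so the three fractions need not sum to 1.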

Web Application

The frontend (Vue.js + Vite) exposes three sections:

- Pipeline visualization — carousel walkthrough of the processing stages for non-technical stakeholders

- Static gallery — curated processed specimens; click to expand original → segmented overlay comparison

- Live demo — drag-and-drop SEM image upload; backend processes and returns: SAM overlay image, three per-class mask images, confidence distribution chart, area pie chart per category

The Flask backend wraps the full pipeline as REST endpoints (/upload, /img/<id>, /process/<id>), with results cached server-side per image ID.
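One way the per-image caching and the three routes could fit together is sketched below. Everything beyond the route paths is an assumption: the `ResultCache` class, the hash-derived image ID, the HTTP methods, the `file` form field, and the JSON response shapes are hypothetical and not taken from the project. Flask is imported inside `create_app` so the cache can be exercised on its own.

```python
import hashlib


class ResultCache:
    """In-memory store for uploaded images and their analysis results."""

    def __init__(self, process_fn):
        self._process_fn = process_fn  # e.g. the SAM -> VGG pipeline
        self._images = {}
        self._results = {}

    def add(self, data):
        """Store raw image bytes and return a short content-derived ID."""
        image_id = hashlib.sha256(data).hexdigest()[:16]
        self._images[image_id] = data
        return image_id

    def image(self, image_id):
        return self._images[image_id]  # raises KeyError for unknown IDs

    def result(self, image_id):
        """Run the pipeline once per image ID and reuse the result afterwards."""
        if image_id not in self._results:
            self._results[image_id] = self._process_fn(self._images[image_id])
        return self._results[image_id]


def create_app(cache):
    """Wire the cache to the three routes named in the text (methods assumed)."""
    from flask import Flask, Response, abort, jsonify, request

    app = Flask(__name__)

    @app.post("/upload")
    def upload():
        file = request.files.get("file")  # form field name is an assumption
        if file is None:
            abort(400)
        return jsonify({"id": cache.add(file.read())})

    @app.get("/img/<image_id>")
    def img(image_id):
        try:
            data = cache.image(image_id)
        except KeyError:
            abort(404)
        return Response(data, mimetype="application/octet-stream")

    @app.get("/process/<image_id>")
    def process(image_id):
        try:
            return jsonify(cache.result(image_id))
        except KeyError:
            abort(404)

    return app
```

Here `process_fn` is expected to return a JSON-serializable dict. A production deployment would likely bound the cache size or persist results to disk, which this sketch does not attempt.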

SEM Dataset

The dataset consists of real SEM images of excavated bronze mold specimens provided by Prof. Jing Zhichun, covering mold materials from multiple archaeological sites and time periods. Images span 500×–2000× magnification. A second set of reference specimens (UBC series) provides comparative clay samples for cross-site analysis.

Technical Summary

| Item | Details |
| --- | --- |
| Language | Python 3, JavaScript |
| Models | SAM (ViT-H, Meta), VGG-B (PyTorch) |
| Task | 3-class microstructure segmentation + classification (gravel / clay / cavity) |
| Accuracy | 92% test accuracy (VGG-B on classified masks) |
| Frontend | Vue.js, Vite |
| Backend | Flask, OpenCV, NumPy |
| Dataset | Real SEM images at 500×, 1000×, 2000×, provided by SUSTech archaeology lab |
| Application | Prof. Jing Zhichun, SUSTech Institute for Advanced Study in Social Science |
| GPU | ~7 GB VRAM (SAM ViT-H inference) |